
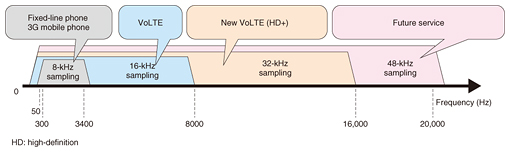

Feature Articles: Basic Research Envisioning Future Communication

Vol. 14, No. 11, pp. 16–20, Nov. 2016. https://doi.org/10.53829/ntr201611fa2

Transmission of High-quality Sound via Networks Using Speech/Audio Codecs

Abstract

This article describes two recent advances in speech and audio codecs. One is EVS (Enhanced Voice Services), the new speech codec standard from 3GPP (3rd Generation Partnership Project), which can transmit speech signals, music, and even the ambient sound on the speaker's side. This codec has been adopted in a new VoLTE (voice over Long-Term Evolution) service with enhanced high-definition voice (HD+), which provides clearer and more natural conversations than conventional telephony services such as those using fixed-line/land-line and 3G mobile phones. The other is MPEG-4 Audio Lossless Coding (ALS), standardized by the Moving Picture Experts Group (MPEG), which makes it possible to transmit studio-quality audio content to the home. ALS is expected to be used by some broadcasters, including IPTV (Internet protocol television) companies, in the near future.

Keywords: audio/speech coding, data compression, international standards

1. Introduction

Many audio and speech codecs are available, and we can select the most suitable one for each usage scenario, ranging from those that require reasonable quality at low bit rates to those that demand original signal quality at high bit rates. With the increases in network capacity that have been achieved, content that requires high bit rates, such as 4K television (TV) and high-resolution audio, can also be transmitted. However, the first priority is to transmit speech signals in ordinary telephony without congestion. Therefore, speech codecs for telephony should use as low a bit rate as possible. In addition, they must have low algorithmic delay because the longer the codec delay is, the more difficult it becomes for people to converse with each other.
In contrast, one-way transmission such as broadcasting is less sensitive to delay. Most audio codecs take advantage of this tolerance for longer delay and compress audio signals efficiently by processing them in sufficiently long frames. Speech codecs, on the other hand, use a human phonation model, so they are not suitable for music. Conversely, when clean speech items are coded by audio codecs at low bit rates, we get the impression that a machine is talking. Speech and audio compression schemes have these kinds of trade-offs. To achieve the best quality of speech and music content with less delay, experts in speech and audio coding around the world have been working together to develop new codecs. Furthermore, lossless coding, which refers to compression without any loss, has been standardized to ensure that the quality of the original content is maintained.

This article presents an overview of the 3rd Generation Partnership Project (3GPP) Enhanced Voice Services (EVS) codec, which has been newly standardized for mobile communications, and MPEG-4 Audio Lossless Coding (ALS) for high-resolution audio, which was standardized by the Moving Picture Experts Group (MPEG).

2. 3GPP EVS codec for mobile communications

3GPP is the international standardization consortium for mobile communications. It has newly defined the EVS speech and audio coding standard for voice over Long-Term Evolution (VoLTE) [1, 2]. Conventional speech coding schemes for mobile phones have been based on code excited linear prediction (CELP). These schemes use a human voice production model and achieve high-quality speech transmission at very low bit rates. EVS combines CELP with newly developed low-delay, low-bit-rate audio coding modules, and it achieves high-quality transmission of various types of input signals, including speech, audio, background noise, and background music [3, 4].
EVS uses new bandwidth extension technologies to support signals with higher sampling rates up to 48 kHz, in contrast to the narrowband signal (8-kHz sampling rate) of conventional fixed-line/land-line telephones and 3G mobile phones and the wideband signal (16-kHz sampling rate) of VoLTE. Note that the wideband signal is used for AM (amplitude modulation) radio, the super-wideband signal (32-kHz sampling rate) is used for FM (frequency modulation) radio, and the full-band signal (48-kHz sampling rate) is used for digital broadcasting, as shown in Fig. 1.
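The relationship between the bandwidth classes above and the audio band they can carry can be checked with a few lines of arithmetic. The following is a minimal sketch in Python; the dictionary and function names are illustrative, and the only fact added to the text is the sampling theorem (a signal sampled at a given rate can represent frequencies up to half that rate). The band a codec actually encodes may be narrower than this upper bound.

```python
# Sampling rates (Hz) for each bandwidth class, as listed in the text.
SAMPLING_RATES_HZ = {
    "narrowband": 8_000,
    "wideband": 16_000,
    "super-wideband": 32_000,
    "full-band": 48_000,
}


def nyquist_limit_hz(sampling_rate_hz: int) -> float:
    """Upper bound on the representable audio frequency (half the sampling rate)."""
    return sampling_rate_hz / 2


for name, fs in SAMPLING_RATES_HZ.items():
    limit_khz = nyquist_limit_hz(fs) / 1000
    print(f"{name:>15}: fs = {fs / 1000:g} kHz, audio band below {limit_khz:g} kHz")
```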

EVS has been optimized for VoLTE with a frame length of 20 ms and an algorithmic delay of 32 ms. It has been designed to minimize the perceptual distortion caused by packet loss, whereas coding schemes for conventional 3G mobile phones were optimized for robustness against bit errors. In addition, EVS covers a wide range of bit rates from 5.9 kbit/s to 128 kbit/s and enables frame-by-frame selection of bit rates. EVS is also interoperable with AMR-WB (Adaptive Multi-Rate Wideband), which enables smooth migration from the conventional VoLTE system.

During the standardization process, a huge number of subjective quality evaluations were conducted for various coding conditions, input items, and languages. The results of the evaluations indicated that EVS outperformed conventional speech and audio coding schemes in terms of quality [5]. NTT used a similar procedure to conduct listening tests on Japanese materials [6]. The results of the tests confirmed the superiority of EVS over the coding schemes for conventional mobile communications systems. Note that all EVS development has been carried out by 12 organizations*1 based mainly in Europe, North America, and East Asia, including Japanese companies.

EVS has been deployed in commercial services such as VoLTE (HD+) by NTT DOCOMO since the summer of 2016 [7] and by some operators in the USA and Europe as well [8]. We believe that EVS will allow billions of people around the world to enjoy high-quality communication in the near future.
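To make the frame and bit-rate figures above concrete, the sketch below works out how many samples and how many coded bits fall into one 20-ms frame. It is plain arithmetic on the numbers given in the text, not part of any EVS implementation; the function names are illustrative, each rate is treated as constant for simplicity, and transport headers are ignored.

```python
FRAME_MS = 20  # frame length given in the text


def samples_per_frame(sampling_rate_hz: int, frame_ms: int = FRAME_MS) -> int:
    """Number of audio samples covered by one frame."""
    return sampling_rate_hz * frame_ms // 1000


def bits_per_frame(bit_rate_bps: int, frame_ms: int = FRAME_MS) -> int:
    """Coded payload bits per frame at a constant bit rate (no packet headers)."""
    return bit_rate_bps * frame_ms // 1000


frames_per_second = 1000 // FRAME_MS  # 50 frames per second

# Lowest and highest bit rates mentioned in the text.
for rate_bps in (5_900, 128_000):
    print(f"{rate_bps / 1000:g} kbit/s -> {bits_per_frame(rate_bps)} bits per frame")

# Frame size in samples for 16-kHz (wideband) and 48-kHz (full-band) input.
for fs in (16_000, 48_000):
    print(f"{fs / 1000:g} kHz -> {samples_per_frame(fs)} samples per frame")
```

Because the bit rate can be selected frame by frame, an encoder could in principle switch between these payload sizes up to 50 times per second.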

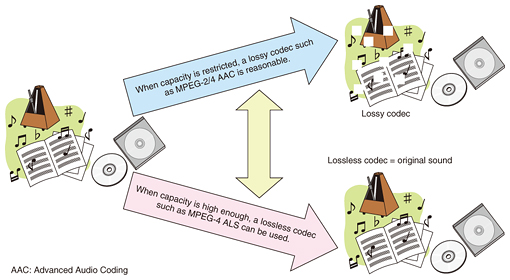

3. MPEG-4 ALS for high-resolution audio transmission

MPEG has standardized many useful audio and video codecs that are commonly used in daily life. The MPEG audio subgroup standardized the lossless compression scheme MPEG-4 ALS, which can perfectly reconstruct original signals. NTT is one of the contributors to this standard [9, 10]. Although the compression ratio depends on the input signal, a file is normally compressed to around 30% to 70% of its original size [11, 12].

High-resolution audio*2 has become more popular recently and enables precisely digitized music. Lossy codecs often cannot convey the fidelity of high-resolution audio, so a lossless codec such as MPEG-4 ALS is necessary. For example, the audio signal in TV broadcasting is usually produced in a studio or broadcasting station in a 48-kHz, 24-bit format, which is referred to as high-resolution audio. Since access to radio waves is limited, a high bit rate cannot be assigned to the content, and the audio signal must be compressed with a lossy codec at some loss of quality. Such a codec still lets us enjoy broadcast content because it reduces the bit rate remarkably without any noticeable difference from the original signal.

The ultrahigh-definition (or super-high-definition) TV service called 4K/8K TV has now started, and it uses very high bit rates. The audio signal is also expected to use higher bit rates. The Association of Radio Industries and Businesses (ARIB), which defines the standards for radio-wave systems in Japan, standardized ARIB STD-B32 for the 4K/8K TV system. This standard enables the use of MPEG-4 ALS as one of the audio codecs [14]. MPEG-4 ALS can reconstruct in the home, with the quality of the original signal, music content that was produced in a broadcasting studio, because the lossless codec guarantees bit-exactness over the entire transmission. We can enjoy high-resolution audio in our living rooms when a sufficient bit rate is assigned to the audio signal (Fig. 2).
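The bit-exactness property described above can be illustrated with a toy example. The sketch below is not the MPEG-4 ALS algorithm; it assumes a deliberately simple fixed predictor (each sample is predicted by the previous one) purely to show the general idea of lossless coding: transmit the integer prediction error, then invert the step exactly at the decoder. The test tone and all function names are hypothetical.

```python
import math


def encode(samples: list[int]) -> list[int]:
    """Toy lossless step: replace each sample by its difference from the previous one."""
    residual = []
    prev = 0
    for s in samples:
        residual.append(s - prev)
        prev = s
    return residual


def decode(residual: list[int]) -> list[int]:
    """Exact inverse of encode(): accumulate the differences."""
    samples = []
    prev = 0
    for r in residual:
        prev += r
        samples.append(prev)
    return samples


# Hypothetical test signal: one 20-ms block of a 440-Hz tone,
# sampled at 48 kHz and quantized to the 24-bit integer range.
FS = 48_000
AMPLITUDE = 0.5 * (2**23 - 1)
pcm = [round(AMPLITUDE * math.sin(2 * math.pi * 440 * n / FS)) for n in range(960)]

residual = encode(pcm)

# Bit-exact reconstruction: the decoded samples equal the original integers.
assert decode(residual) == pcm

# The residual has a much smaller range than the signal, so an entropy
# coder would need fewer bits per sample to represent it.
print("peak |sample|  :", max(abs(s) for s in pcm))
print("peak |residual|:", max(abs(r) for r in residual))
```

Because every step operates on integers and is exactly invertible, no rounding error can accumulate, which is what distinguishes this kind of scheme from a lossy codec.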
IPTV (Internet protocol TV) services, which use optical-fiber lines, may introduce MPEG-4 ALS before the radio-wave services do.
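A rough idea of the bit rates involved follows from the figures given earlier in this section (48-kHz, 24-bit audio compressed to around 30% to 70% of its original size). The sketch below is simple arithmetic; the two-channel (stereo) configuration is an assumption made for illustration and is not stated in the text.

```python
def pcm_bit_rate_bps(sampling_rate_hz: int, bit_depth: int, channels: int) -> int:
    """Bit rate of uncompressed PCM audio in bits per second."""
    return sampling_rate_hz * bit_depth * channels


# 48 kHz and 24 bit come from the text; 2 channels (stereo) is an assumption.
raw_bps = pcm_bit_rate_bps(48_000, 24, 2)

# Typical lossless compression range quoted in the text: 30% to 70% of original size.
low_bps = raw_bps * 30 // 100
high_bps = raw_bps * 70 // 100

print(f"uncompressed  : {raw_bps / 1000:g} kbit/s")
print(f"after lossless: {low_bps / 1000:g} to {high_bps / 1000:g} kbit/s")
```

Even at the favorable end of that range, the lossless stream under these assumptions needs several hundred kbit/s, which is consistent with the point that a sufficient bit rate must be assigned to the audio signal.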

To support practical deployment of MPEG-4 ALS, NTT has prepared related standards such as MPEG-4 ALS Simple Profile and IEC 61937-10 Edition 2 by the International Electrotechnical Commission (IEC) [15]. MPEG-4 ALS Simple Profile restricts the parameters of the input signal, such as the sampling frequency, number of channels, bit depth, and frame size, and also restricts some processing tools and functionalities that require higher computational complexity, such as compression of floating-point format signals. ARIB STD-B32 recommends the use of MPEG-4 ALS Simple Profile with LATM/LOAS (Low-overhead Audio Transport Multiplex/Low Overhead Audio Stream)*3 encapsulation. To facilitate the connection of a TV and a digital audio device, IEC 61937-10 Edition 2 newly supports the bitstream of MPEG-4 ALS Simple Profile with LATM/LOAS, which can be transmitted via radio waves with 4K/8K video. With 4K/8K TV, we will then be able to listen to high-quality music through a high-resolution TV or by using a digital amplifier that can decode MPEG-4 ALS.

4. Future work

The EVS codec enables high-quality communications by means of speech and music even when delay and bit rates are low. MPEG-4 ALS can transmit original audio content losslessly. The delivery of high-quality music has now been achieved, so we will start to consider ways to achieve even more realistic audio transmission. Basic research on interactive communication may achieve a synergistic effect between live venues and reception sites. We will continue to develop speech and audio codec schemes to make timely contributions to new services.

References
