
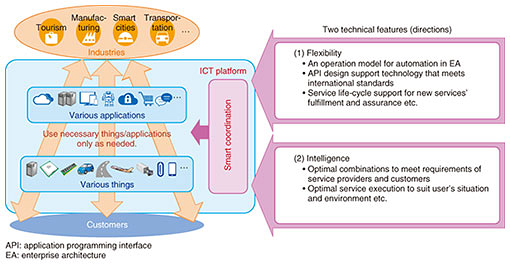

Feature Articles: Network Technology for Digital Society of the Future—Toward Advanced, Smart, and Environmentally Friendly Operations

Vol. 17, No. 7, pp. 8–13, July 2019. https://doi.org/10.53829/ntr201907fa1

Technology for Smart Coordination of ICT/Network Resources and Services

Abstract

In the near future, things and applications will be connected across companies and industries to help people enjoy new innovative services. This article introduces technology for coordinating ICT (information and communication technology)/network resources and services so that things and applications can be prepared only as needed.

Keywords: orchestration, AI, IoT

1. Introduction

Service providers in various industries are developing their own things and applications in order to launch new services powered by the Internet of Things (IoT) and artificial intelligence (AI). By "things," we mean all kinds of objects covered by the IoT, such as sensors and devices. In the near future, service providers will combine things and applications provided by different companies and industries to make their services more innovative. To support these service providers, we are developing a new information and communication technology (ICT) platform for reusable things and applications (Fig. 1). Our aim is to enable service providers to easily select only the resources they need. This requires a coordination mechanism, which we call smart coordination.

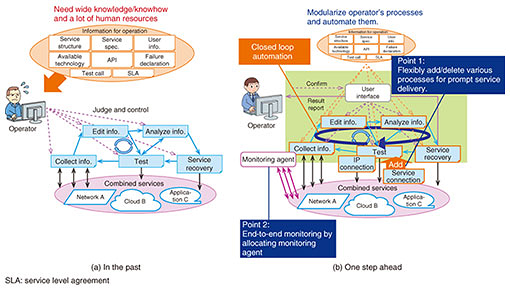

As shown in Fig. 1, the smart coordination platform has two technical features (directions): (1) flexibility and (2) intelligence.

The first feature, flexibility, is needed because combining services gives rise to a huge number of different use cases that the platform must accommodate. Its key elements are a coordinated operation function and an advanced assurance function. The coordinated operation function provides one-stop service building so that things and applications can be deployed easily, and an architecture built around this function can handle growing amounts of data and diverse demands from various services. The advanced assurance function supports the coordinated operation function by modularizing operation processes so that they can flexibly meet users’ needs.

The second feature, intelligence, involves applying AI technologies to provide more attractive services. AI can help find the optimal combination of services to meet the requirements of large numbers of users, and it provides an environment in which services are optimally configured and executed for each user’s situation.

2. Research and development (R&D) of flexibility and automation

Our objective is to develop technology that makes functions flexible and automated. This section explains some of the R&D being carried out toward this goal.

2.1 Catalog-driven orchestration and operation model

Until now, service providers have combined existing resources/functions themselves in order to build services. However, this conventional approach must change to accelerate service development in line with a cloud-first approach. In fact, resources and functions are publicly available as application programming interfaces (APIs), so service providers can combine them to provide a new service (hereinafter, a federated service).
Service providers or resource providers trying to offer a federated service need an easy way to coordinate its various resources/functions to meet users’ needs. Because such federated services are built upon many configurations, we made use of an existing catalog-driven orchestration technology [1]. This technology simplifies configuration by expressing it in the form of a catalog and coordinates various resources/functions flexibly. We targeted public cloud services and mobile services for one-stop configuration using commercially available APIs. As a result of this research, we conducted a proof of concept in Las Vegas, USA, in 2018 [1], and part of the artifact was used for one-stop building of the service environment.

Automation of operations will also be necessary for on-demand provision of resources/functions. Although automation technologies are already widespread in cloud service operation, network service operation is not yet sufficiently automated. There are, however, concerns that automation will affect many existing systems in networks. Virtualization techniques such as software-defined networking are gaining attention as a way to address such concerns, because virtualization enables equipment to be provisioned on demand. Our ICT platform therefore uses such a virtualization technique to automate operations.

Regarding automation, we are also studying business processes for service management, order management, and customer management. Automating these business processes requires defining an operation model of each business process and the relationships between the operation function APIs. TM Forum [2] has been responding to the increasing use of APIs by redefining business processes, data models, and functions, and we are taking such standardization and market trends into account in our research on the operation model.
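To make the catalog idea concrete, the following Python sketch treats a catalog as a declarative description of a federated service: each entry names a resource, the entries it depends on, and the parameters a provider API would need, and the orchestrator provisions the entries in dependency order. The catalog format, the entry names, and `provision()` are hypothetical and are not the format or API of the technology cited in [1]; a real orchestrator would call the providers' public APIs inside `provision()`. The sketch needs Python 3.9 or later for `graphlib`.

```python
from graphlib import TopologicalSorter

# Hypothetical catalog for a federated service: each entry lists the entries
# it depends on and the parameters a provider's API would need.
CATALOG = {
    "cloud_vm":     {"depends_on": [], "params": {"size": "small"}},
    "mobile_slice": {"depends_on": [], "params": {"bandwidth_mbps": 50}},
    "vpn_link":     {"depends_on": ["cloud_vm", "mobile_slice"], "params": {}},
    "app":          {"depends_on": ["vpn_link"], "params": {"image": "analytics:1.0"}},
}


def provision(name, params):
    """Stand-in for a call to a provider's public API (hypothetical)."""
    return f"{name}:ok"


def orchestrate(catalog):
    """Provision every catalog entry only after the entries it depends on."""
    # TopologicalSorter expects a mapping of node -> predecessors.
    graph = {name: entry["depends_on"] for name, entry in catalog.items()}
    # The full order is computed first, so a cyclic catalog is rejected
    # (graphlib.CycleError) before anything is provisioned.
    order = list(TopologicalSorter(graph).static_order())
    results = {name: provision(name, catalog[name]["params"]) for name in order}
    return order, results


order, results = orchestrate(CATALOG)
print(order)
```

Keeping the catalog declarative means a new federated service is described by editing data rather than orchestration code, which is the kind of simplification the catalog-driven approach aims at.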
2.2 API design support technology

As we study operation function APIs, we are also considering the use of public APIs. In public APIs, request/response description rules and authentication methods often differ from one service provider to another, and this complexity of API specifications confuses service providers. Without rules for describing APIs, API designs themselves can become confusing. We are therefore developing API description rules that take industry standards into account, together with a function that checks whether a designed API conforms to these rules. We also used the Swagger Specification [3], which is widely used for describing API specifications, to implement a method of generating a template in which service providers only need to answer a few simple questions.

2.3 Advanced assurance function

When a service provider creates a federated service, a huge burden is placed on operation personnel, because they need to understand the relationships among the multiple resources/functions that make up the federated service (Fig. 2(a)). Assurance automation is therefore important. Among the various automation technologies, we have focused on the closed loop [4], a loop of processes in which services follow a specific sequence of steps: (1) collect and manage information on the operation status of wholesale services, (2) analyze the collected information and make decisions, (3) control the wholesale services, and then return to (1) and repeat. Each service use case includes such a closed-loop process. Providing a federated service means supporting many individual event cases, each of which might need its own closed loop. Consequently, assurance automation for a federated service requires as many closed loops as there are event cases.
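The three-step closed loop described above can be sketched in Python as follows: each pass collects status, analyzes it to reach a decision, and applies a control action before repeating. The single latency metric, the 100 ms threshold, the scale-out action, and its toy effect on latency are all hypothetical placeholders. The point is that a loop like this encodes one event case, which is why a federated service with many event cases would need many such loops.

```python
from dataclasses import dataclass, field


@dataclass
class WholesaleService:
    """Toy model of a wholesale service watched by the loop (hypothetical)."""
    name: str
    latency_ms: float
    capacity: int = 1
    actions: list = field(default_factory=list)


def collect(service):
    # (1) Collect and manage information on the operation status.
    return {"service": service.name, "latency_ms": service.latency_ms}


def analyze(status, threshold_ms=100.0):
    # (2) Analyze the collected information and decide on an action (or none).
    return "scale_out" if status["latency_ms"] > threshold_ms else None


def control(service, decision):
    # (3) Control the service according to the decision.
    if decision == "scale_out":
        service.capacity += 1
        service.latency_ms /= 2  # toy effect of adding capacity
        service.actions.append(decision)


def closed_loop(service, iterations=5):
    for _ in range(iterations):  # return to (1) and repeat
        control(service, analyze(collect(service)))
    return service


svc = closed_loop(WholesaleService("vpn", latency_ms=350.0))
print(svc.capacity, svc.latency_ms)  # 3 87.5
```

Starting at 350 ms, two scale-out actions bring latency to 87.5 ms, after which the analysis step decides no further action is needed.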
Furthermore, closed loops will also be needed for each service use case, which multiplies the number of closed loops. Designing such a huge number of closed loops one by one would be too laborious and time-consuming to be feasible. To solve this problem and facilitate new service creation, we have been researching an advanced assurance function technology that can automate the processes operators use in a federated service, as shown in Fig. 2(b). This technology has two key points.

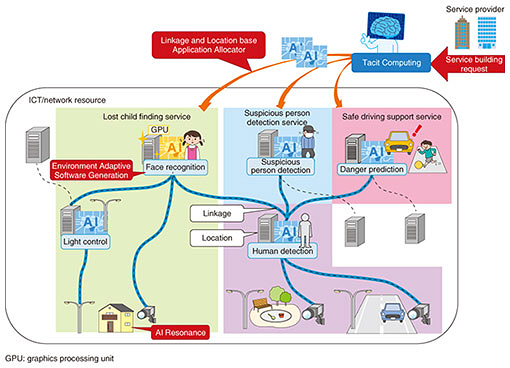

The first point is the architecture. It consists of modularized assurance functions that make judgments autonomously and share information with other functions via messaging. This makes it possible, for example, to add new assurance tasks to existing closed loops without designing a new closed loop, and to support the wide variety of assurance processes of each federated service.

The second point is enhanced monitoring technology. Our study of the architecture described above revealed that collecting as much information as possible is essential for closed-loop automation. Information on network/cloud services is increasingly published via APIs, but this is not enough to identify the causes of failures. To solve this problem, the monitoring technology deploys an agent that collects detailed information such as per-user flow information, adding new types of information for analysis and judgment. This enables end-to-end monitoring of federated services that combine resources/applications of different service providers.

3. R&D on intelligence

We have been developing Tacit Computing to provide sophisticated services. Tacit Computing is a generic name for IoT and AI service technologies produced by NTT Network Service Systems Laboratories. Building services on demand by combining devices, computers, and software requires functions that grasp the status of these components in real time, match them with service requirements, and coordinate them properly. To implement such functions, we have been developing automatic device behavior analysis [5], which automatically identifies the types and models of devices in the network. We have also been developing three elemental technologies for building service systems by appropriately combining devices and software (Fig. 3), which we introduce in this section.

3.1 Linkage and Location base Application Allocator

The Linkage and Location base Application Allocator is a technology for selecting appropriate devices, software, and a network from many candidates in order to build services. To guarantee quality of service, a large number of services must be able to use devices, computers, and networks at the same time without overloading them. This technology finds the data and processing that multiple services have in common and aggregates them to make the overall processing more efficient. It then selects an appropriate computer location for each processing step based on network bandwidth, transmission delay, and service characteristics. For example, when a suspicious person detection service and a lost child finding service run at the same time, the allocator can extract images of people, a processing step common to both services, and then execute the detection efficiently on one computer near the camera.

3.2 AI Resonance

AI Resonance is a technology that automatically coordinates multiple devices and software programs upon request. Building a service requires choosing settings that suit the type of device, its location, and the software. Consider, for example, an outdoor lost child finding service that consists of a camera and a nighttime light. The light intensity must be suitable for video analysis, and what is suitable depends on the location and the software. Conventionally, such settings are made manually, but this will likely become impossible in the future because the combinations of devices and software will be enormous and will change dynamically. To solve this problem, the technology automatically evaluates the result of a device's operation and applies the appropriate setting in real time. In the lost child finding service, for example, lights that can make camera images clear are automatically selected from the network and adjusted to the appropriate intensity.
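The evaluate-and-adjust behavior described for AI Resonance can be illustrated with a minimal Python sketch that steps a light through candidate intensity levels, evaluates the result, and keeps the first level that meets a quality target. The scoring function `image_quality()`, its linear model, the ambient-light values, and the 0.9 target are hypothetical stand-ins; a real system would score actual camera frames with the video analysis software and would rerun the search as conditions change.

```python
def image_quality(intensity, ambient=0.2):
    """Hypothetical score for a camera frame lit at `intensity` (0.0 to 1.0).

    Quality grows with total illumination and is capped at 1.0.
    """
    return min(1.0, ambient + 0.8 * intensity)


def tune_light(quality_fn, target=0.9, levels=None):
    """Return the lowest light level whose evaluated quality meets `target`.

    Returns None if no available level is bright enough.
    """
    if levels is None:
        levels = [i / 10 for i in range(11)]  # 0.0, 0.1, ..., 1.0
    for level in sorted(levels):
        if quality_fn(level) >= target:  # evaluate the operation result
            return level                 # apply the first adequate setting
    return None


# Night: little ambient light, so the light must be fairly bright.
print(tune_light(image_quality))  # 0.9
# Dusk: more ambient light, so the light can stay off.
print(tune_light(lambda lvl: image_quality(lvl, ambient=0.95)))  # 0.0
```

Choosing the lowest adequate level is one reasonable policy because it avoids over-lighting; the article does not state which policy AI Resonance actually uses.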
The technology eliminates the need for service providers to design and adjust settings manually and also enables multi-device services to be provided on demand.

3.3 Environment Adaptive Software Generation

Environment Adaptive Software Generation is a technology that automatically optimizes source code for the hardware specifications of the execution environment. Some computers in the network have special hardware such as a graphics processing unit (GPU) or field programmable gate array (FPGA). To produce sophisticated services, software must deliver high performance regardless of which computer is selected as its execution environment. This technology automatically converts source code into code that performs well in that execution environment. For example, we confirmed that the technology automatically found the part of the code in video analysis software that was suitable for the GPU, and the automatically generated program ran about four times faster than the original program for the central processing unit (CPU). In the future, we aim to adapt it to various types of hardware such as IoT devices and quantum computers.

4. Future work

In this article, we introduced two features for achieving smart coordination of ICT/network resources and services. The first is the architecture of the coordinated operation function, which has flexibility and intelligence. The second is the advanced assurance function. The development of these features contributes to value-added solutions for the ICT platform. We will also continue developing Tacit Computing for resource selection and configuration automation and then expand its application to various IoT resources and services.

References

Trademark notes

All brand names, product names, and company/organization names that appear in this article are trademarks or registered trademarks of their respective owners.