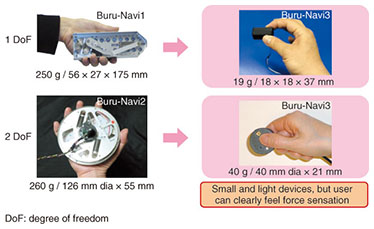

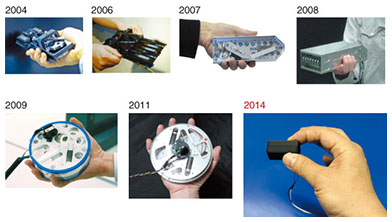

Feature Articles: New Developments in Communication Science
Vol. 12, No. 11, pp. 20–25, Nov. 2014. https://doi.org/10.53829/ntr201411fa4

Buru-Navi3 Gives You a Feeling of Being Pulled

Abstract

We have been investigating a way to create a sensation of being pulled even when there is no actual physical pulling. We have found through our studies of the characteristics of human force perception that asymmetric oscillation can create this sensation. Prototype devices to achieve this have been developed, although some early prototypes were as bulky as the receiver of a conventional fixed-line phone and were therefore not suitable for mobile use. We have recently succeeded in creating a thumb-sized prototype device containing a linear actuator that can effectively provide a pulling sensation. We achieved this by focusing on the high tactile acuity of human fingers. This article introduces the innovative prototype and its possible applications.

Keywords: sensory illusion, haptics, force sensation

1. Introduction

What if a compass (or a compass application in a mobile phone) actually pulled your hand in a certain direction instead of just showing the direction? Force stimulation can be likened to a parent leading a child by the hand, and it has the potential to be more intuitive and expressive than cutaneous stimulation, which refers to stimulation of nerves via skin contact. Unfortunately, conventional force display systems are not suitable for mobile devices since the generation of low-frequency force requires a fixed mechanical ground, which mobile devices lack. Some mobile torque feedback devices that do not require grounding have also been proposed, but they produce neither a constant nor a translational force; that is, they can generate only short-term rotational force.

We have been researching a way to create a force sensation in mobile devices and have developed a force display device called Buru-Navi, which generates a sensation of being pulled or pushed by exploiting the characteristics of human perception [1, 2]. Our objective is not to create a physical tugging force, but to create an illusory sensation of being pulled or pushed. We can do this by placing some kind of mass into a box and moving the mass back and forth with different acceleration patterns for the two directions. This asymmetric motion generates a brief and strong force in a desired direction (e.g., forward) and a weaker one over a longer period of time in the reverse direction (e.g., backward). Note, however, that the two forces cancel when averaged over one cycle, so no net physical force is produced. Because the longer, weaker force is much smaller in magnitude than the brief, strong one, people who hold the box feel as if they are being pulled in the desired direction rather than feeling only the discrete vibration that is common in conventional mobile devices today.

2. Miniaturization of force device

Over the past several years, we have been refining a method to create a sensory illusion of being pulled and have developed various prototypes to create such a sensation [3, 4]. Our previous prototypes were able to create a clear force illusion, but they were based on mechanical linkages, so they were generally too large and heavy to embed in mobile equipment. Thus, we have selected a linearly vibrating actuator*1, which does not require any mechanism to convert rotational motion to translational motion, and used it in a thumb-sized prototype (Figs. 1 and 2). However, generally speaking, the power-to-weight ratio of an actuator decreases drastically as its size decreases, leading to an insufficient force illusion.

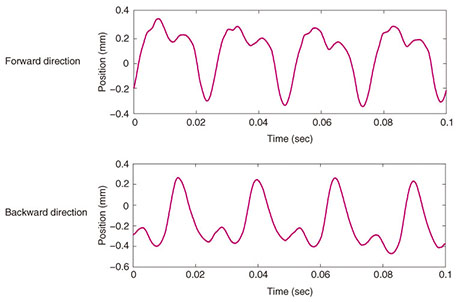

Accordingly, we have redesigned and optimized an asymmetric oscillation pattern by working to achieve the best combination of the amplitude of the small actuator and the sensitivity of the human fingerpad. Specifically, we have used a higher frequency range than that used before; the motion patterns of the actuator for forward and backward directions are shown in Fig. 3.
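
To make the idea of an asymmetric oscillation pattern concrete, the following minimal sketch models one cycle as two rectangular force phases: a brief strong pulse and a longer weak return. It is only an illustration, not the drive scheme used in Buru-Navi3. The rectangular shape, the function names, and the numerical values are assumptions; the 2 ms and 6 ms durations are borrowed from the 125-Hz pulse alternation reported in Section 3 purely as example timings.

```python
def weak_phase_force(strong_force, t_strong, t_weak):
    """Magnitude of the weak return force whose impulse cancels the strong pulse.

    Assumes an idealized two-phase rectangular force profile (an assumption,
    not a profile stated in the article).
    """
    return strong_force * t_strong / t_weak


def cycle_frequency_hz(t_strong, t_weak):
    """Repetition frequency of one strong-plus-weak cycle."""
    return 1.0 / (t_strong + t_weak)


# Illustrative values only (hypothetical force unit, example timings).
T_STRONG = 0.002   # s, brief strong phase
T_WEAK = 0.006     # s, longer weak phase
F_STRONG = 1.0     # arbitrary units

f_weak = weak_phase_force(F_STRONG, T_STRONG, T_WEAK)
net_impulse = F_STRONG * T_STRONG - f_weak * T_WEAK

print(f"weak-phase force: {f_weak:.3f} (strong phase: {F_STRONG:.3f})")
print(f"net impulse per cycle: {net_impulse:.1e}")
print(f"cycle frequency: {cycle_frequency_hz(T_STRONG, T_WEAK):.1f} Hz")
```

In this idealized model the weak phase is three times longer than the strong phase, so its force is one third as large, and the net impulse per cycle is zero: the device does not physically pull the hand, and any pulling sensation comes from how the two phases are perceived.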

There are four types of tactile mechanoreceptors in the human fingerpad; of these, the Pacinian corpuscle is most sensitive to high-temporal-frequency vibrations (100–300 Hz) in the normal direction [5], but seems to have nearly the same sensitivity in sliding directions tangential to the skin [6, 7]. In contrast, SA1 and RA1 fibers, whose signals come mainly from Merkel and Meissner corpuscles, respectively, are sensitive to lower-frequency (< 100 Hz) vibrations, and some of them can clearly code (that is, detect) the sliding or tangential force direction [6]. Thus, asymmetrically oscillating stimuli that contain the frequency components that stimulate these receptors will induce a clearer force sensation of being pulled. As a result, we have succeeded in creating a sensation of being pulled with a thumb-sized force display, and the sensation is just as clear and strong as it was with our previous prototypes.

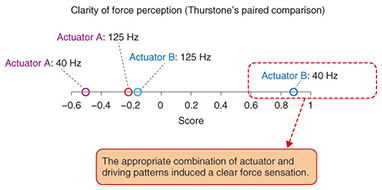

3. Comparison of clarity of force illusion

Miniaturization is of little value if the force illusion weakens as the size and weight of the device decrease. To determine an effective profile of asymmetric acceleration for inducing a clear force sensation, we conducted an experiment that compared the clarity of the perceived force sensation across actuators and asymmetric driving patterns of the input signal, in order to find the best combination for a miniaturized force display.

We selected two kinds of linear actuators; each actuator was covered with a cylinder that was 40 mm in diameter and 17 mm thick and made of ABS (acrylonitrile butadiene styrene) resin. A piece of sandpaper (#1000 grit) was pasted on its surface to control the surface roughness. Two driving patterns of 40 Hz (pulse alternation 7:18 ms) and 125 Hz (pulse alternation 2:6 ms) were used as optimal inputs to each actuator, which gave us four stimulus conditions (two actuators × two driving patterns).

In the experiment, each participant was presented with two of the four conditions at a time and asked which one gave the stronger sensation of being pulled/pushed. Each participant made six paired comparisons*2. All participants reported that they felt a strong force sensation of being pulled/pushed with a specific condition. There were no significant differences between the tendencies of the participants’ evaluations (χ²(40) = 25.5, p = 0.96, not significant).

Although a paired comparison provides ordinal data, the data can be converted into an interval scale by using the order statistics (Thurstone’s method). The values of the clarity of the force sensation in the interval scale are shown in Fig. 4. In this scale, the four conditions were ordered along a continuum to represent the degree of difference in the clarity of perceived force.
The figure shows that an acceleration pattern created by the combination of a specific actuator (actuator B) and a specific driving pattern was highly likely to be judged to create a clear force sensation. This suggests that a clear force sensation can be created if we select the appropriate asymmetric acceleration pattern of oscillation.

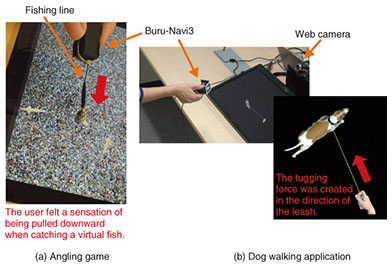

4. Applications

Applications using Buru-Navi3 include a pedestrian navigation system and a new haptic experience in gaming. At NTT Communication Science Laboratories Open House 2014, we presented an angling game application and a virtual dog walking application.

In the angling game, users can feel a virtual fish nibbling and pulling the hook. A fishing line with a small weight at its tip was attached to Buru-Navi3 (Fig. 5(a)). When the weight approaches the mouth of the virtual fish, the fish bites it. When the fish is caught, Buru-Navi3 generates the sensation of the fish pulling the hook. Many participants seemed to enjoy the novel sensation produced by the device, and some reported being “addicted” to it.

In the dog walking application (Fig. 5(b)), the participants felt as if a virtual dog walking around was pulling on the leash. We used the 2-DoF*3 version of Buru-Navi3 in combination with a motion tracking system. The amplitude and direction of the force sensation were altered dynamically according to the positions of the user’s hand and the virtual dog.
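
One way the amplitude and direction of the force sensation might be derived from the two tracked positions is sketched below. The article does not give the actual mapping; the slack-leash model, the function name `leash_pull`, and the parameters `slack_m` and `max_stretch_m` are hypothetical.

```python
import math


def leash_pull(hand_xy, dog_xy, slack_m=0.5, max_stretch_m=1.0):
    """Return (amplitude in [0, 1], unit direction) of the pulling cue.

    Hypothetical model: no pull while the dog is within the slack length of
    the leash; beyond that, the amplitude grows linearly with distance and
    saturates at 1. The direction points from the hand toward the dog.
    """
    dx = dog_xy[0] - hand_xy[0]
    dy = dog_xy[1] - hand_xy[1]
    dist = math.hypot(dx, dy)
    if dist <= slack_m:
        return 0.0, (0.0, 0.0)  # leash is slack: no force cue
    amplitude = min((dist - slack_m) / max_stretch_m, 1.0)
    return amplitude, (dx / dist, dy / dist)


# Example: the dog is 1 m ahead of the hand along the y axis.
amplitude, direction = leash_pull((0.0, 0.0), (0.0, 1.0))
print(f"amplitude={amplitude:.2f}, direction={direction}")
```

Calling such a function on every tracking update would change the cue continuously as the hand and the virtual dog move, which is the behavior described above.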

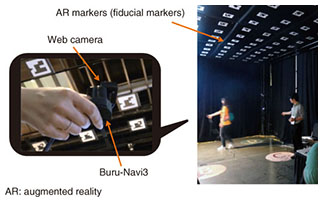

In addition, we recently presented a pedestrian navigation system at the ACM SIGGRAPH*4 2014 Emerging Technologies conference [8]. We have implemented a tracking system based on fiducial markers since marker-based tracking provides repeatable, robust, and reliable performance. We hung some polystyrene boards containing original printed fiducial markers on the ceiling. Users held a web camera so it was facing the ceiling and thus able to capture the markers. The user’s position and orientation were estimated from each marker’s unique ID and its distorted form in the captured image. A photo of the pedestrian navigation system using Buru-Navi3 is shown in Fig. 6.
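
The pose estimation step can be illustrated with a simplified two-dimensional sketch. It assumes that the marker's pose relative to the camera (an offset in the floor plane and a rotation) has already been recovered from its distorted form in the image, and it only shows how that observation combines with the marker's known ceiling pose, looked up by ID, to give the user's position and orientation. The marker table, the function name, and all numbers are hypothetical, and the real system works with a perspective camera image rather than this planar model.

```python
import math

# Hypothetical map from marker ID to its known world pose (x, y, yaw in rad).
MARKER_WORLD_POSES = {
    7: (2.0, 3.0, 0.0),
}


def user_pose_from_marker(marker_id, offset_xy, observed_yaw):
    """Estimate the user's planar pose (x, y, yaw) from one observed marker.

    offset_xy: marker position expressed in the user's (camera) frame.
    observed_yaw: marker rotation relative to the user's frame.
    Uses T_world_user = T_world_marker * inverse(T_user_marker) in 2D.
    """
    mx, my, marker_yaw = MARKER_WORLD_POSES[marker_id]
    user_yaw = marker_yaw - observed_yaw
    cos_y, sin_y = math.cos(user_yaw), math.sin(user_yaw)
    # Rotate the observed offset into the world frame, then step back from
    # the marker's world position to reach the user.
    world_dx = cos_y * offset_xy[0] - sin_y * offset_xy[1]
    world_dy = sin_y * offset_xy[0] + cos_y * offset_xy[1]
    return mx - world_dx, my - world_dy, user_yaw


# Marker 7 seen 1 m along the user's x axis, rotated -90 degrees in view.
x, y, yaw = user_pose_from_marker(7, (1.0, 0.0), -math.pi / 2)
print(f"user at ({x:.2f}, {y:.2f}), heading {math.degrees(yaw):.0f} deg")
```

Because each marker carries a unique ID, a single visible marker is enough in this model to fix the user's pose; combining several markers would be a natural extension but is not shown here.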

It is worth mentioning that in a previous study, we conducted an experiment to examine whether Buru-Navi2, the previous prototype, allowed people with visual impairments to walk safely along a predefined route at their usual walking pace without any previous training. We found that by delivering simple navigational information, Buru-Navi2 enabled more than 90% of participants with visual impairment to walk along a predefined route through a maze without any prior training [4]. This finding suggests that the 2-DoF version of Buru-Navi3 will also be capable of leading people along a route (even people who might ordinarily have difficulty finding their way) just as well as Buru-Navi2 did, since the fundamentals underlying these systems are essentially identical.

5. Conclusion and future work

Buru-Navi3 is the result of our efforts to investigate the mechanism underlying human perception and motion. In the development process, we have overcome issues such as the trade-off between miniaturization of the device and the strength of the illusory sensation. Miniaturized devices are advantageous in that they can be used with mobile and wearable appliances. Such technology will open the door to providing rich haptic experiences in mobile applications, which is a relatively new field.

The characteristics of human perception have been considered deeply in developing conventional video and audio systems. In the future, various kinds of sensory information, for action as well as for perception, will be considered in order to develop sophisticated interactive systems. Thus, we will continue to focus not only on understanding the mechanisms of sensorimotor processing in the brain, but also on finding prerequisites for developing interactive, natural, and user-friendly interfaces.

References
