
Feature Articles: Communication Science as a Compass for the Future

Vol. 13, No. 11, pp. 25–30, Nov. 2015. https://doi.org/10.53829/ntr201511fa5

Yu bi Yomu: A New Text Display System Using Tracing Behavior

Abstract

NTT Communication Science Laboratories is researching a text display system called Yu bi Yomu, in which the appearance of text changes dynamically in response to the user’s finger-tracing behavior. Research on digital text display has so far centered on how to achieve the feeling of using paper. However, digital text display has the potential to go beyond simply imitating the paper medium by exploiting digital features to bring about major changes in the way that reading itself is performed. This article provides an overview of the Yu bi Yomu system and introduces the advantages of using this method.

Keywords: text display technology, interactive interface, finger-tracing reading

1. Introduction

Paper has been the main medium for presenting text for a very long time. However, recent progress in digital media technology is rapidly increasing the opportunities for displaying text on computer displays (digital text displays) instead of printing text on paper. Digital text display can do more than just simplify the handling of huge amounts of textual information. It also has the potential to bring about major changes in the way that reading itself is performed, that is, in reading behavior, by exploiting the features of digital devices. In principle, the content of text presented on paper does not change. A digital device, however, enables the dynamic presentation of information [1–4]. In this regard, the market for devices equipped with a touch-response function such as tablet computers and smartphones has been growing rapidly in recent years. A key feature of these digital devices is the adoption of an interface by which the user can directly touch and manipulate what is being displayed.
Compared to keyboard or mouse operations, using one’s fingers or a touch pen is highly intuitive and interactive. Such methods of direct manipulation have also come to be used for perusing text documents, but most of those methods have been focused on reproducing the sense of manipulating media having fixed text, and the paper medium in particular. The benefits of performing direct operations on characters and text as symbols that convey information have hardly been considered to date. NTT Communication Science Laboratories is researching a text display system called Yu bi Yomu [5, 6] that makes use of the dynamic-display and touch-based direct-manipulation features of tablet devices (Fig. 1). This system displays text very faintly when the reader is not interacting with the display. However, if the reader begins to trace the characters of that text with his or her finger, the system will gradually increase the contrast of those characters, which is known as fade-in. At this time, the high-contrast characters can be left in that state, but it is also possible to configure the settings so the characters gradually fade out after a specific length of time. In either case, the reader proceeds to read the text while the characters of that text become increasingly visible by finger tracing. In addition, dynamic text display enables intuitive manipulation of such features of reading text as emotional response and intonation that are difficult for static text display to explicitly represent.

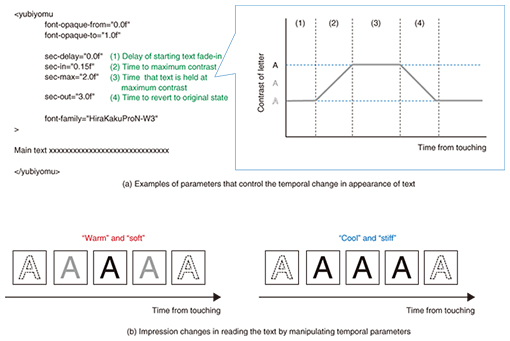

2. Basic structure of software using the Yu bi Yomu system

We are currently implementing the Yu bi Yomu system as software running on commercially available tablet computers. We are also developing versions for iOS*1, Android*2, Mac OS*3, and Windows*4 devices (a touch-panel display is required), although functions will differ somewhat among these devices. The software expects the text that the user would like to display to be prepared as a text file in a special format (Fig. 2(a)). This format is a type of Extensible Markup Language (XML)*5 and combines the text to be displayed with symbols called tags, in much the same way that web pages are prepared. Preparing text files in this format (Yu bi Yomu files) enables the user to change the text to be displayed as well as the temporal profile of elements such as the text font and font size without having to modify the software program. For example, specifying the following four parameters controls the temporal change in the appearance of text when finger tracing (Fig. 2(a)).

(1) Time from user touching the screen to start of text fade-in
(2) Time from start of text fade-in to maximum contrast
(3) Time that text is held at maximum contrast
(4) Time from start of text fade-out back to original state

We found that manipulating these parameters describing the temporal profile of text display can change the reader’s overall impression of reading a document, as indicated by the words warm and soft, and cool and stiff in Fig. 2(b) [6].
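One way to picture the four timing parameters listed above is as a contrast envelope applied to each character from the moment it is touched. The following is a minimal sketch under stated assumptions, not the actual Yu bi Yomu implementation: the class name `FadeProfile`, its field names, the resting and peak contrast values, and the use of linear ramps are all hypothetical, since the article does not specify the shape of the curve.

```python
from dataclasses import dataclass


@dataclass
class FadeProfile:
    """Hypothetical container for the four timing parameters (in seconds)."""
    delay: float      # (1) touch -> start of fade-in
    fade_in: float    # (2) start of fade-in -> maximum contrast
    hold: float       # (3) time held at maximum contrast
    fade_out: float   # (4) start of fade-out -> original state
    base: float = 0.1  # assumed faint resting contrast
    peak: float = 1.0  # assumed maximum contrast


def contrast_at(profile: FadeProfile, t: float) -> float:
    """Return the contrast of a character t seconds after it was touched.

    Linear ramps are an assumption made for illustration only.
    """
    span = profile.peak - profile.base
    if t <= profile.delay:
        return profile.base              # still waiting to fade in
    t -= profile.delay
    if t < profile.fade_in:
        return profile.base + span * t / profile.fade_in
    t -= profile.fade_in
    if t <= profile.hold:
        return profile.peak              # held at maximum contrast
    t -= profile.hold
    if t < profile.fade_out:
        return profile.peak - span * t / profile.fade_out
    return profile.base                  # back to the original state
```

In this sketch, setting `hold` to `float("inf")` leaves traced characters at maximum contrast, while short `fade_in` and `fade_out` values give an abrupt appearance and long values a gradual one.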

The Yu bi Yomu system can also record the user’s tracing behavior. The user can start and stop this recording by pressing a software button and can make use of the recorded tracing behavior without having to do any programming or editing. In particular, the data obtained by recording tracing behavior can be used to prepare animation in which text automatically rises up based on a time series of finger-tracing actions. Additionally, recorded data may be saved in a tab-separated text file and exported to spreadsheet software such as Excel*6 to enable reading behavior to be analyzed for educational purposes.
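The recording and export steps described above can be sketched as follows. This is an illustration under assumptions rather than the system's actual file layout: the `TraceSample` record, its two columns (`t` and `char_index`), and both function names are hypothetical. The sketch writes samples as tab-separated text that spreadsheet software can open, and derives the time at which each character was first touched, which is the kind of time series an automatic text animation could replay.

```python
import csv
from typing import Dict, Iterable, NamedTuple, TextIO


class TraceSample(NamedTuple):
    """One recorded touch sample (hypothetical layout)."""
    t: float         # seconds since recording started
    char_index: int  # index of the character under the finger


def write_trace_tsv(samples: Iterable[TraceSample], out: TextIO) -> None:
    """Write samples as tab-separated text with a header row."""
    writer = csv.writer(out, delimiter="\t", lineterminator="\n")
    writer.writerow(["t", "char_index"])
    for s in samples:
        writer.writerow([f"{s.t:.3f}", s.char_index])


def reveal_schedule(samples: Iterable[TraceSample]) -> Dict[int, float]:
    """Map each character to the first time it was touched.

    Replaying characters at these times reproduces the uneven pacing
    of the original trace instead of a fixed display speed.
    """
    first_seen: Dict[int, float] = {}
    for s in samples:
        first_seen.setdefault(s.char_index, s.t)
    return first_seen
```

Keeping only the first touch per character is a design choice of this sketch; a fuller record would also keep repeated touches so that re-reading can be analyzed.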

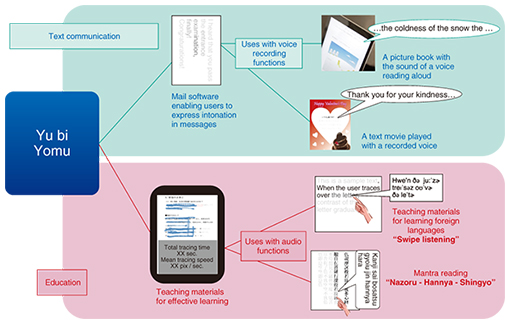

3. Usage scenarios for the Yu bi Yomu system

The Yu bi Yomu system can be applied to the text-communication and education fields (Fig. 3).

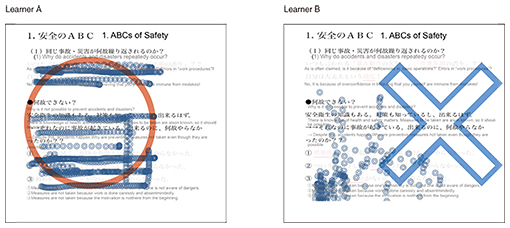

3.1 Application to text communication

We believe that incorporating software using the Yu bi Yomu system in text-communication tools such as email and messaging apps can facilitate smooth communication. For example, text-based animation using tracing data can enable a user to add nuance and subtle emotions to short messages. Animated text using tracing data can also be combined with a function for recording audio during finger tracing so that an animated message can be created that combines speech and moving text. Animation in which text automatically flows on a display has come to be used in many everyday scenarios such as television captions, karaoke systems, and electronic billboards. Experts have traditionally prepared such animation using computers and specialized software, but the Yu bi Yomu system makes it easy for even general users to create animated text for smartphones and other devices. Furthermore, in most animated text prepared by computer, the speed at which text appears is fixed. In contrast, animated text based on human tracing includes text whose appearance accelerates and decelerates in an uneven manner. Such complex displays of text can express intonation in a message and convey the sender’s individuality and presence [7, 8], which cannot easily be achieved with traditional computer systems.

3.2 Application to education

We believe that the Yu bi Yomu system could also be applied with good effect in the field of education. Unlike silent reading, reading while tracing involves a physical action (finger tracing) that can be observed by other people. Moreover, as the position of the words being read basically agrees with the position of the finger while tracing, examining a record of finger tracing makes it easy to learn how that person is progressing in reading that text. In this way, the Yu bi Yomu system makes it relatively simple to visualize the way in which someone reads text.
For example, in a classroom setting, using the Yu bi Yomu system to prepare teaching material makes it easy to visualize interactive behavior while reading, which has the potential to improve the quality of instruction. We conducted an experiment in collaboration with NTT-ME on the use of Yu bi Yomu teaching material. In this experiment, we introduced teaching material using the Yu bi Yomu system in classroom-based training for ordinary employees, and we compared the results with instruction using the same material displayed statically in the usual way on a tablet device [9]. We found that the average score in a memory test taken after training was about 10% higher in the group receiving instruction using Yu bi Yomu material than in the group using material presented in the conventional way. There are a number of possible reasons why test results improved in this way, and further research is needed to arrive at a detailed explanation. At the least, however, the results of this experiment have shown that the quality of instruction can be improved by using the Yu bi Yomu system for certain types of teaching material and classroom settings. Furthermore, when we converted the tracing data obtained from learners who used Yu bi Yomu teaching materials into graphic images and examined the results, we found that the way in which reading was approached could differ greatly between people. Examples of finger tracing by two learners, one who did well in the recollection test and one who did poorly, are shown in Fig. 4. It is obvious which of the two approached the material in a more serious manner.

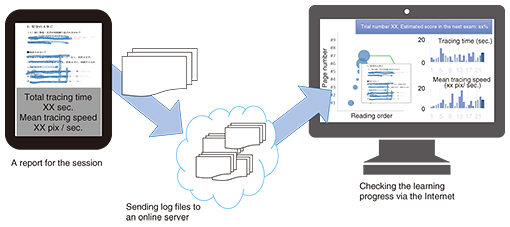

Text display using the Yu bi Yomu system can also be combined with a text-to-speech function to prepare teaching material on foreign languages and ancient texts such as sutras that may be difficult to read on one’s own. For example, software to read text out loud while finger tracing could be created by preparing a text-to-speech file beforehand and changing the generated speech in unison with the text being traced. Likewise, educational software with a text-to-speech function could provide a sense of self-improvement as one learns how to read text that one could not otherwise read on one’s own. This approach holds the possibility of keeping the learner motivated and focused with a desire to continue learning.

4. Future outlook

Incorporation of the Yu bi Yomu system introduced in this article in the software used in popular digital devices such as smartphones and tablets is expected to expand the communication functions provided by those devices. Furthermore, combining the software with a text-to-speech function should make it applicable to picture books read out loud by a familiar voice. In addition, collecting a large amount of tracing data over time may make it possible to uncover key relationships between tracing and learning conditions. If so, we can expect the Yu bi Yomu system to be useful in large-scale learning systems (Fig. 5).

The Yu bi Yomu system focuses on the temporal and interactive characteristics associated with the digital display of information. It is an attempt at incorporating the temporal expression of emotional response and intonation common to the spoken language in textual expression using tracing behavior. In this research, it is not our aim to reproduce on digital devices textual expression as achieved on the medium of paper. Rather, we seek to exploit the special features and formats of digital devices to open up new possibilities in communication.

References
