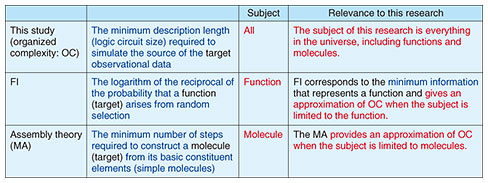

Front-line Researchers

The Law of Increasing Complexity Was Formulated, Informed by Cryptographic Methodologies

Abstract

As a world-renowned researcher studying cryptography and NTT Fellow at NTT Social Informatics Laboratories, Dr. Tatsuaki Okamoto has formulated the problem of “organized complexity” (a concept he has long contemplated) as “the law of increasing complexity,” informed by cryptographic methodologies. This law provides a unified explanation of how complexity increases over long periods in subjects such as the universe, living organisms, and human societies. In this interview, we asked him about his thinking behind the formulation of the law of increasing complexity, specific examples demonstrating the validity of the law, and the future direction that the Earth and human society might take if the law holds.

Keywords: cryptography, organized complexity, law of increasing complexity

What is the phenomenon of increasing complexity?

—It has been three years since your last interview. Would you tell us about the progress of your research?

In my previous interview, I defined “organized complexity.” In this interview, in which I refer to organized complexity simply as “complexity” [1], I would like to talk about “the law of increasing complexity” [2], which I have formulated to describe how the complexity of many different subjects increases over time. I’ll first describe specific subjects to which this law applies and the fields in which it can be useful, then give examples of its applications [2, 3].

The idea that the complexity of everything in this world increases over time can be explained by broadly dividing complexity into two types. One type is disorganized complexity, which can also be called “randomness.” The other type is organized complexity, which is the main theme of my research.
The phenomenon by which the former (randomness) increases over time in isolated systems was formulated in the 19th century as the law of increasing entropy, known as the second law of thermodynamics. In contrast, the latter (organized complexity) describes systems in which many elements are organically related. Such systems include open systems (subsystems of the universe, such as the Earth) that exchange energy and matter with their surroundings. Physics has hardly dealt with organized complexity, which is more closely related to real-world fields involving humans, such as biology and the humanities, and hardly any attempt has been made to formulate organized complexity in the manner of physics.

To give a real-world example of organized complexity, I’ll start with physics and the origin of the universe. It is currently believed that the universe was born in the Big Bang approximately 13.8 billion years ago. Immediately after the Big Bang, elementary particles appeared and gathered to form atoms, which then gathered further to form molecules. Starting from simple elementary particles, the universe has thus gradually formed more complex matter. In this process, numerous hydrogen atoms gathered to form stars such as the Sun, heavier atoms originating from stars gathered to form planets, and stars in turn gathered into galaxies, galaxy clusters, and superclusters. When we observe the universe, we can see small matter gradually forming large-scale structures and these structures changing shape over time. In other words, we see the phenomenon of increasing organized complexity.

I’ll next give an example from biology. The Earth was formed about 4.6 billion years ago, and bacteria (prokaryotes) appeared as the first life on Earth about 4 billion years ago. Single-celled eukaryotes then formed, and multicellular organisms arose when eukaryotic cells aggregated.
These organisms evolved and organized themselves into a collection of organisms called the “biosphere,” which is still becoming more complex today. Our species, Homo sapiens, appeared on Earth more than 200,000 years ago. Humans first gathered in very small groups, but they then formed organizations, created societies, developed civilizations, and evolved into the highly advanced and complex human societies we have today.

I have long wondered whether it is possible to formulate laws governing this increasing organized complexity in the manner of those governing increasing entropy. Few researchers have considered this question, but I have noticed some movement toward answering it. One example is the concept of “functional information (FI),” which is defined as the logarithm of the reciprocal of the probability that a certain function will occur. Put more simply, the lower the probability that a function occurs, the higher its complexity. This concept was defined by biology researchers and represents, for example, how rare a functional ribonucleic acid (RNA) sequence is compared with completely random RNA sequences [4]. Biologists have also collaborated with mineralogists to investigate, from the perspective of FI, how minerals on Earth have become more complex and evolved for various reasons [5].

The next example is “assembly theory,” from which the molecular assembly index (MA) is derived [6]. Assembly theory is based on the idea that more-complex molecules are created by starting with simple molecules (such as hydrogen and oxygen) and combining them. By observing and analyzing light spectra from planets, researchers identify the molecules present on a given planet and analyze how much effort is involved in creating those molecules.
In other words, they are attempting to determine the level of organized complexity of a molecule from the minimum number of steps required to create it. As these two examples show, research on organized complexity, a topic I’ve been interested in for many years, is gradually progressing.

—Would you explain the law of increasing complexity?

The above-mentioned studies on organized complexity [5, 6] established theories limited to specific subjects such as organisms and molecules. In contrast, the concept of complexity that I have defined in my research is more general and can encompass all kinds of matter, including the organisms and molecules that those studies focused on.

To measure any subject in terms of complexity, we need to observe it by using various methods. Observation means seeing, touching, and feeling. We observe all kinds of objects in the world, sometimes directly with our eyes and sometimes via microscopes, telescopes, and various large-scale observation devices. When we touch something with our hands, the sensors in our hands react and send electrical signals to our brains. In short, we observe objects in various ways and recognize their existence through these observations.

Observational data always have an information source behind them, which can be represented as a probability distribution, so complexity can be defined in relation to probability distributions. My approach is universal: by equating the minimum amount of information required to describe the source of the observed subject (the probability distribution) with the minimum size of a logic circuit required to simulate it, it is theoretically possible to define the complexity of any subject. However, even if this minimum descriptive quantity can be defined, it is not easy to determine it concretely, so it is useful to have an approximation method that is appropriate for the observed subject.
In that sense, when the observed subject is limited to the functions of living organisms, FI (the minimum amount of information representing a function) provides a good approximation of the complexity. When the subject of observation is a molecule, it is difficult to define the complexity of a molecular system precisely, but the MA provides a good approximation because it is calculated from the minimum number of steps required to build up the system from simple molecules. In this manner, the complexity I proposed applies to all objects, whereas previous studies provide approximations for individual subjects (see Table 1).

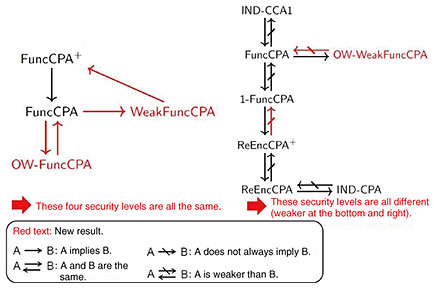

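As a rough illustration of the two approximations in Table 1, the following Python sketch computes toy versions of both on strings. It is not the procedure used in the cited studies. Following the definition given earlier, FI is taken as the base-2 logarithm of the reciprocal of the fraction of all length-n binary sequences whose degree of function reaches a threshold. The "function" used here (containing a chosen motif) is a hypothetical stand-in. The assembly index is computed by brute force as the minimum number of joining steps needed to build a target string from its individual characters, with any piece already built available for reuse. Strings stand in for molecules here. The function names are illustrative, and exhaustive search only scales to very short sequences.

```python
import itertools
import math
from typing import Callable


# --- Functional information (FI), toy version -------------------------------

def functional_information(
    n: int, degree: Callable[[str], float], threshold: float
) -> float:
    """FI in bits: log2(1 / p), where p is the fraction of all length-n binary
    sequences whose degree of function is at least `threshold`."""
    total = 2 ** n
    hits = sum(
        1
        for bits in itertools.product("01", repeat=n)
        if degree("".join(bits)) >= threshold
    )
    if hits == 0:
        # No sequence performs the function, so FI is unbounded.
        raise ValueError("no sequence reaches the threshold")
    return math.log2(total / hits)


def motif_degree(motif: str) -> Callable[[str], float]:
    """Hypothetical 'function': degree 1.0 if the sequence contains `motif`."""
    return lambda seq: 1.0 if motif in seq else 0.0


# --- Assembly index, toy version on strings ---------------------------------

def assembly_index(target: str) -> int:
    """Minimum number of joins needed to build `target` from its characters,
    where every piece built so far can be reused in later joins."""
    if not target:
        raise ValueError("target must be non-empty")

    def reachable(pool: frozenset, steps_left: int) -> bool:
        if target in pool:
            return True
        if steps_left == 0:
            return False
        # A join at most doubles the longest piece, so prune hopeless branches.
        if max(len(p) for p in pool) * 2 ** steps_left < len(target):
            return False
        for left, right in itertools.product(pool, repeat=2):
            joined = left + right
            # Only substrings of the target can lie on a shortest pathway.
            if joined in target and joined not in pool:
                if reachable(pool | {joined}, steps_left - 1):
                    return True
        return False

    building_blocks = frozenset(target)  # the distinct single characters
    depth = 0
    # Iterative deepening: the first depth that succeeds is the minimum.
    while not reachable(building_blocks, depth):
        depth += 1
    return depth
```

For example, `functional_information(4, motif_degree("1111"), 1.0)` returns 4.0 bits because only 1 of the 16 four-bit sequences contains the motif. `assembly_index("ABCABC")` returns 3 (A+B, then AB+C, then ABC+ABC), since two joins can build a piece of at most four characters.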
I believe that formulating the law of increasing complexity is worthwhile because it provides the first solution to a problem that someone would inevitably have to tackle.

—Complexity is increasing in every aspect of life on Earth.

After examining the systems to which the law of increasing complexity applies, I proposed the hypothesis that the law emerges in dynamic systems (non-equilibrium systems) with abundant flows of free energy. This hypothesis is consistent with our intuition that increasing complexity applies to the observable universe, which includes the biosphere and human society on Earth.

I’ll give some examples of applications of the law of increasing complexity. The world of physics is currently governed by constants such as the speed of light, the gravitational constant, the elementary charge, and Planck’s constant, but no one knows why these constants have the values they do. We can only say that the values just happen to be what they are. However, it is understood that if the values were to deviate even slightly, life, including humans, could not have come into existence. For example, if the physical constants that define the nuclear and electromagnetic forces were to deviate even slightly, the binding forces between the constituents of the atomic nucleus would change, and complex atoms would not form. As a result, complex things, including humans, would not exist.
In other words, the physical constants of the universe are very delicately balanced at values that are conducive to creating life on Earth, and this balance is called “fine-tuning.” Fine-tuning is a very strange fact, and various scholars have proposed theories to explain it. The most widely supported of these is the “anthropic principle.” However, this principle is a tautological argument stating, “If a person observing the laws of physics exists, the observer must be consistent with the laws of physics of the universe; otherwise, they cannot exist.” The claim that physical constants such as those I mentioned above are fine-tuned for the existence of humans is extremely subjective and is often criticized for that reason.

With my hypothesis, however, fine-tuning can be explained reasonably: the physical constants of the universe are fine-tuned for the emergence of the law of increasing complexity. Since complexity is described purely mathematically, it does not depend on human existence as the anthropic principle does, so everything can be discussed objectively and theoretically.

If we look at the biosphere, many organisms, including humans, have sexes and lifespans. Various studies have tried to answer why sexes and lifespans arose, but unraveling their fundamental origins remains quite difficult. I believe the law of increasing complexity offers a clear and convincing answer: sexes and lifespans exist in the biosphere because they increase complexity.

Let us look at the existence of sexes in more detail. If sexes did not exist, and there were no biological differences between the two parent organisms, all individuals of a species would share a single structure.
However, because sexes exist, a species has individuals with two different biological structures, and that condition leads to diverse interactions between males and females in accordance with those structural differences. In sexually reproducing organisms, offspring combine the genes of both parents, and that combination results in greater diversity in the offspring’s generation than in the parents’ generation. If the lifespan of an organism were extremely long, spanning dozens of generations (effectively meaning no lifespan), the older generation, which holds a competitive advantage, would dominate, and generational turnover would not proceed smoothly. In reality, lifespans are limited to a few generations, so generational turnover proceeds smoothly in a manner that increases diversity. Thus, the distinction between males and females and the existence of lifespans in organisms can both be explained as phenomena that arose in accordance with the law of increasing complexity.

So how are social norms in human society explained by the law of increasing complexity? For example, a common social norm encourages altruistic behavior by stating that you should do to others as you would have them do to you. According to the law of increasing complexity, the existence of such social norms increases the complexity of human society. Without altruistic social norms, people in need of help would not receive it, and systems to protect vulnerable members of society would not exist, which would harm individuals and society and decrease diversity. Conversely, if such social norms exist, actions and institutions that protect individuals and society will be promoted, and diversity will increase. This situation can also be explained as a phenomenon that arises in accordance with the law of increasing complexity.
Humanity will increase its complexity and evolve into “augmented humans”

—According to your proposed law of increasing complexity, what kind of world do you imagine human society will become? Do you think people should be concerned about the rapid development of artificial intelligence?

Artificial intelligence (AI) is also a field I’m interested in. However, when considering the complexity of human society, I think the most important factor is the relationship between people. Human society has become complex as these relationships have become more complex. For example, primitive societies were small groups of only a few people, but they grew larger as agriculture developed, and with the advent of the Internet, human relationships have become even more complex.

It is often said that AI will take away people’s jobs, and I think this statement holds some truth. However, I believe that AI will be a valuable tool that helps human relationships evolve and become more complex. Humans are creatures that have “evolved” themselves by, for example, inventing various tools. In a sense, tools extend human capabilities. Given that fact, we can suppose that humans are not so much self-contained beings as creatures that use tools, such as computers, as extensions of their bodies. Even memories are not only stored in the brain but also recorded in external media, such as books and the cloud, and cars, bulldozers, cranes, and the like can be seen as extensions of human limbs. We are no longer simply human beings; that is to say, we have evolved by extending various functions externally.

Seeing AI in a similar light, I consider it an extension of the human brain. If AI is properly used in future human societies, it will likely be for time-consuming intellectual tasks. In the programming field, such tasks might include tedious coding.
If AI takes over those tasks, hierarchical and superior-subordinate relationships in the workplace will improve, and more-equal human relationships will be formed at a higher level. A more-complex human society of more advanced “augmented humans” will thus emerge. With these considerations in mind, I predict that even-more-capable humans will build societies with even-more-complex relationships at a higher level.

I believe that humans are the only living organisms that have raised the complexity of their own population to today’s extent, which makes us unique within the biosphere. A recent example of humanity taking on a bold challenge is responding to asteroid impacts. In prehistoric times, such an impact caused the extinction of the dinosaurs and many other species on Earth. With this consequence in mind, humanity is now tracking the movements of asteroids, investigating potential candidates for the next collision with Earth, and even experimenting with how to respond if the probability of a collision rises in the coming decades. A few years ago, NASA conducted an experiment to alter the orbit of an asteroid by crashing a probe into it.

When viewed from the outside, the Earth appears to be a single living organism. For example, the Earth defends itself by crashing a flying object into an enemy charging toward it, acting like a living organism protecting itself from external threats. As a unique species with increased complexity, humans have somehow taken on the role of protecting the entire Earth. Humans are also working to lower temperatures and address global warming. These efforts will undoubtedly evolve over the next 100 years or more, and in several hundred years, humans may even have a higher degree of control over the Earth’s environment.
In other words, humans are a unique species that emerged amid the increasing complexity of the biosphere. Just as the brain emerged as organisms evolved (became more complex), perhaps human society will take on a kind of brain function for the Earth (biosphere), for example, by striking incoming objects to protect the planet or by controlling its environment.

Elon Musk has been pursuing plans to colonize Mars, and I have a similar idea that follows from my own thinking. That is, I wonder if humans will one day venture into space and try to transplant Earth’s natural environment to another planet. Just as living organisms reproduce and perpetuate life on Earth, we humans might try to leave behind descendants of Earth’s biosphere (life) throughout space.

Humans have been continuously studying all living things on Earth. Many organisms live in symbiotic or predator-prey relationships with a limited number of species, whereas humans are studying all living organisms on a global scale and endeavoring to protect endangered species. In other words, humans play a role in repairing damage to the biosphere. If we consider the whole Earth as one new living organism, we can surmise that human society is evolving in a direction that increases its complexity as a function responsible for the adjustment and recovery of the Earth.

A new relationship among variations in FuncCPA security is demonstrated

—Would you tell us about your recent achievements in cryptography, which has been your area of expertise for many years?

I’ve been involved in public-key cryptography research for many years, and a standard definition of cryptographic security was established quite some time ago. However, new application environments have created the need to define new security levels.
Cryptography was once used only for secure communication. In application environments in which data are processed while encrypted, such as with fully homomorphic encryption, security has had to be extended and redefined. Such extended and redefined security is called functional chosen-plaintext attack (FuncCPA) security, which has several variations. Clarifying the equivalence and non-equivalence of these variations is important for the future development of public-key-cryptography theory, so I am promoting research on FuncCPA security.

The strengths of the variations of FuncCPA security are shown conceptually in Fig. 1, where strength decreases downward and to the right. In the left half of the figure, FuncCPA is positioned at the center, its strengthened version (FuncCPA+) above it, its one-way version (OW-FuncCPA) below it, and its restricted version (WeakFuncCPA) to its right. Although these four variations differ conceptually in security level, we investigated the actual relationships among the variations marked in red. The findings are as follows: (i) FuncCPA and OW-FuncCPA are equivalent in security level, and (ii) the relationships among the security levels of three of the variations, FuncCPA → WeakFuncCPA → FuncCPA+ → FuncCPA, form a closed loop. These findings prove that all four variations of FuncCPA are equivalent; that is, they offer the same level of security (strength). This result was unexpected and surprising even for us.
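The step from findings (i) and (ii) to the conclusion that all four notions coincide rests on a general fact about implications: notions that lie on a common directed cycle imply one another and are therefore equivalent. The short Python sketch below checks that reasoning mechanically. It encodes only the relationships named above, reads an arrow A → B as "A implies B" (an assumption about the figure), and says nothing about the cryptographic proofs themselves. The names `NOTIONS`, `IMPLICATIONS`, `reachability`, and `equivalence_classes` are illustrative.

```python
from itertools import product

# An edge (a, b) is read as "security under notion a implies security under
# notion b"; this reading of the arrows in Fig. 1 is an assumption.
NOTIONS = ["FuncCPA+", "FuncCPA", "OW-FuncCPA", "WeakFuncCPA"]
IMPLICATIONS = [
    ("FuncCPA", "OW-FuncCPA"),   # finding (i): equivalence means implication
    ("OW-FuncCPA", "FuncCPA"),   # in both directions
    ("FuncCPA", "WeakFuncCPA"),  # finding (ii): the closed loop
    ("WeakFuncCPA", "FuncCPA+"),
    ("FuncCPA+", "FuncCPA"),
]


def reachability(nodes, edges):
    """Transitive closure via Warshall's algorithm: reach[a] is every notion a implies."""
    reach = {a: {a} for a in nodes}
    for a, b in edges:
        reach[a].add(b)
    # product() varies its first component slowest, so k is the outer loop.
    for k, i, j in product(nodes, repeat=3):
        if k in reach[i] and j in reach[k]:
            reach[i].add(j)
    return reach


def equivalence_classes(nodes, edges):
    """Group notions that imply one another (mutual reachability)."""
    reach = reachability(nodes, edges)
    classes, seen = [], set()
    for a in nodes:
        if a not in seen:
            cls = {b for b in nodes if b in reach[a] and a in reach[b]}
            seen |= cls
            classes.append(cls)
    return classes
```

With the five edges above, `equivalence_classes(NOTIONS, IMPLICATIONS)` returns a single class containing all four notions. Removing the edge ("WeakFuncCPA", "FuncCPA+"), an implication from a restricted notion to a strengthened one, leaves three classes: {FuncCPA+}, {FuncCPA, OW-FuncCPA}, and {WeakFuncCPA}.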

The right half of the figure shows the security levels for standard public-key cryptography. Indistinguishability under non-adaptive chosen-ciphertext attack (IND-CCA1), the stronger notion, is at the top, and indistinguishability under chosen-plaintext attack (IND-CPA), the weaker notion, is at the bottom right. Between these two levels, more finely subdivided security notions with various intermediate strengths have been proposed. It was previously assumed that these two levels were sufficient, but usage environments requiring further subdivision have now emerged. By examining the targets marked in red in the figure, it was proven that the security levels of all the listed variations differ hierarchically.

—Would you tell us about the things you value in your research activities and the current challenges you face?

I always value originality, such as new perspectives and innovative methods, and strive to pursue research that has theoretical value and positively impacts the world. Most of all, it is important that I myself believe the research I am pursuing is valuable. The complexity that I’ve been talking about is a research topic that I’ve been thinking about and nurturing in my mind as a personal interest for a long time, in fact, for about 30 to 40 years. Interestingly, the methodologies and knowledge that I’ve gained from my long-standing main profession, research on cryptography, have been extremely useful in my research on complexity. Ideas such as thinking about complexity in terms of probability distributions might not have occurred to me if I hadn’t studied cryptography. In that sense, I can say that my foundation in cryptography research is useful in many areas.

As I mentioned earlier, I’m interested in recent developments in AI. In particular, I’m wondering why the fundamental question “How has AI suddenly become much smarter by learning from massive amounts of data?” remains unanswered.
Many people are approaching AI, which is a black box, from an engineer’s perspective that focuses on how to quickly master its use. The applications of AI are having a huge impact and may trigger a new industrial revolution. The study of the steam engine, which brought about the 18th-century Industrial Revolution, gave rise to the physical theory of thermodynamics. In the same way, new scientific theories may emerge from research that answers the question about this leap in AI. I believe this question should be viewed from the perspective of “emergence,” a collective phenomenon observed in phase transitions and other areas of physics, and that the answer is closely related to the increase in complexity. I hope that young researchers, with their fresh perspectives and bold approach, or anyone else in the world, will be able to develop new theories on the basis of questions like this one.

References

■Interviewee profile

Tatsuaki Okamoto received a B.E., M.E., and Ph.D. from the University of Tokyo in 1976, 1978, and 1988. He has been working for NTT since 1978 and is an NTT Fellow. He is engaged in research on cryptography and information security at NTT Social Informatics Laboratories. He served as the director of the Cryptography and Information Security Laboratories at NTT Research in the USA from 2019 to 2022. He also served as president of the Japan Society for Industrial and Applied Mathematics (JSIAM), a director of the International Association for Cryptologic Research (IACR), and a program chair of many international conferences. He received the best paper and lifetime achievement awards from the Institute of Electronics, Information and Communication Engineers (IEICE), the Distinguished Lecturer honor from the IACR, the Purple Ribbon Award from the Japanese government, the RSA Conference Award for Excellence in Mathematics, and the Asahi Prize.